Face Detection and Face Following

I’m excited to introduce a newly added feature: face detection and tracking.

Before the gridded video is drawn for the first time, the system scans each input video track, locates the faces at 100 equally spaced points along the track, and saves their positions.
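As a rough illustration of the sampling step, here is a minimal sketch of how 100 evenly spaced frame positions could be picked for one track. The function name `sample_frame_indices` and the choice to include both the first and last frame are assumptions for illustration, not the actual implementation; the face detector that would run on each sampled frame is left out.

```python
def sample_frame_indices(total_frames: int, num_samples: int = 100) -> list[int]:
    """Return up to num_samples frame indices spread evenly across a track.

    Hypothetical helper: assumes the first and last frames are both sampled.
    """
    if total_frames <= 0:
        return []
    # A very short track cannot supply more samples than it has frames.
    count = min(num_samples, total_frames)
    if count == 1:
        return [0]
    # Integer arithmetic keeps the spacing exact and the indices increasing.
    return [(i * (total_frames - 1)) // (count - 1) for i in range(count)]


if __name__ == "__main__":
    indices = sample_frame_indices(1000)
    print(len(indices), indices[:3], indices[-1])
```

Each sampled index would then be passed to a face detector, and the resulting face positions stored per track for use at render time.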

When the gridded video is being rendered, the face location in each frame of each track is smoothly interpolated. The system automatically crops, pans, and scales each face to best fit the available grid space. In addition, sub-pixel cropping is used on the original input video to make very slow panning and zooming smoother.
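The sketch below shows one way the render-time step could work: interpolate the saved face boxes to the current frame, then compute a crop rectangle that matches the grid cell's aspect ratio. Everything here is an assumption for illustration: the names `Box`, `interpolate_box`, and `crop_for_cell`, the `margin` parameter, and the use of plain linear interpolation (the real system may smooth differently). The crop is kept in floating-point coordinates rather than rounded to whole pixels, which is what allows a slow pan to move by a fraction of a pixel per frame.

```python
from bisect import bisect_right
from typing import NamedTuple


class Box(NamedTuple):
    """Face box in source pixels: center (cx, cy) plus width and height."""
    cx: float
    cy: float
    w: float
    h: float


def interpolate_box(keyframes: list[tuple[int, Box]], frame: int) -> Box:
    """Linearly interpolate the face box between the two nearest samples.

    keyframes must be sorted by frame index (hypothetical storage format).
    """
    frames = [f for f, _ in keyframes]
    if frame <= frames[0]:
        return keyframes[0][1]
    if frame >= frames[-1]:
        return keyframes[-1][1]
    hi = bisect_right(frames, frame)
    (f0, b0), (f1, b1) = keyframes[hi - 1], keyframes[hi]
    t = (frame - f0) / (f1 - f0)
    return Box(*(a + (b - a) * t for a, b in zip(b0, b1)))


def crop_for_cell(face: Box, src_w: float, src_h: float,
                  cell_aspect: float, margin: float = 2.0):
    """Return a float (left, top, width, height) crop around the face.

    The crop has the grid cell's aspect ratio (width / height), is centered
    on the face where possible, and is clamped to stay inside the source.
    """
    w, h = face.w * margin, face.h * margin
    # Grow one side so the crop matches the cell's aspect ratio.
    if w / h < cell_aspect:
        w = h * cell_aspect
    else:
        h = w / cell_aspect
    # Shrink uniformly if the crop would be larger than the source frame.
    scale = min(1.0, src_w / w, src_h / h)
    w, h = w * scale, h * scale
    # Keep coordinates fractional: no rounding to whole pixels here.
    left = min(max(face.cx - w / 2, 0.0), src_w - w)
    top = min(max(face.cy - h / 2, 0.0), src_h - h)
    return left, top, w, h


if __name__ == "__main__":
    keys = [(0, Box(100, 100, 50, 50)), (10, Box(200, 100, 50, 50))]
    face = interpolate_box(keys, 5)
    print(face, crop_for_cell(face, 1920, 1080, cell_aspect=1.0))
```

The fractional crop rectangle would then be handed to a resampling step that can read the source at non-integer offsets, so the visible motion stays smooth even when the pan speed is well under one pixel per frame.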

The goal is to see the performers better, use screen space more efficiently, and not create any noticeable distractions in the process.

Original layout strategy, with no cropping or zooming. The gridding strategy left blank space at the end:

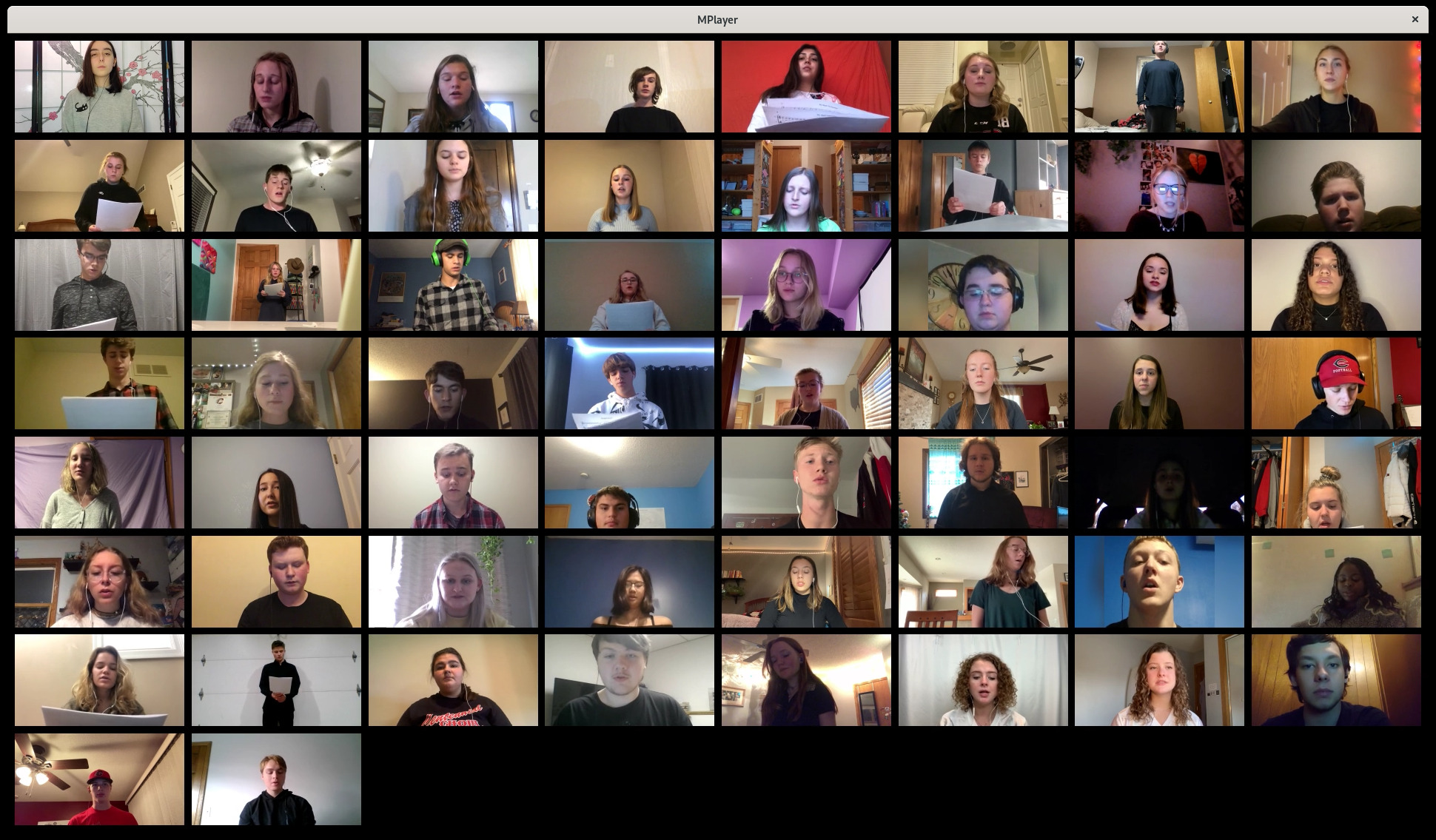

Updated layout strategy with face detection, zooming, cropping, and better grid planning to minimize wasted space and give the best possible view of the performers' faces:

(Credit: Centennial High School Concert Choir singing We Shall Overcome, arranged by Robert Schlidt, Director.)